INNOVATION

Issue 44: Winter 2026

AI-powered hearing aids and listening effort: Promoting cognitive health in aging

Partner to Innovate

For millions of Canadians, hearing loss is a daily challenge. Statistics Canada reports that about 40 per cent of adults aged 20 to 79 have some level of hearing loss, which worsens with age. In noisy places like restaurants, offices or family gatherings, following a conversation can be mentally exhausting, even for people who wear hearing aids. Over time, this extra effort can place added strain on the brain, contributing to fatigue, social withdrawal and possibly cognitive decline.

Using AI to make listening easier

Traditional hearing aids amplify sound, but they often struggle to separate speech from background noise. New research from Toronto Metropolitan University (TMU) suggests that artificial intelligence (AI) could help solve this problem and reduce the mental effort of listening. In a study conducted with industry partner Sonova, a global hearing aid manufacturer, psychology professor Frank Russo and his team tested hearing aids that use a deep neural network, a form of AI inspired by how the brain processes information.

In the study, experienced hearing-aid users completed speech-in-noise listening tests with the AI system switched on and off. When the AI was activated, participants understood speech more clearly and reported that listening felt easier. Speech accuracy improved by 10 to 15 per cent, while listening effort dropped by a similar amount.

“People with age-related hearing loss often feel exhausted after social interactions,” said professor Russo. “By reducing listening effort, AI-powered hearing aids can help them stay engaged and reduce cognitive fatigue.”

A new window into the brain’s listening effort

To study how AI-powered hearing aids affect the brain’s effort to listen, the research team needed a method that avoided the limitations of traditional imaging techniques. MRI scans can’t be used with metal-containing devices, and other methods of measuring brain activity can be disrupted by the electromagnetic fields emitted by the hearing aids themselves.

The answer? Functional near-infrared spectroscopy (fNIRS). While participants wore the hearing aids and listened in realistic conditions, harmless near-infrared light was shone through the scalp to track changes in blood oxygen levels, a signal of how hard different parts of the brain are working. When the AI system was on, the brain data showed reduced activity in the left prefrontal cortex, an area of the brain that works harder when listening becomes difficult.

“This reduction in prefrontal activity suggests that the hearing aid is taking on some of the work the brain would otherwise need to do,” professor Russo explained. “That frees up mental resources for other tasks, like grasping meaning, retaining what’s being said, or actively planning what to say next.”

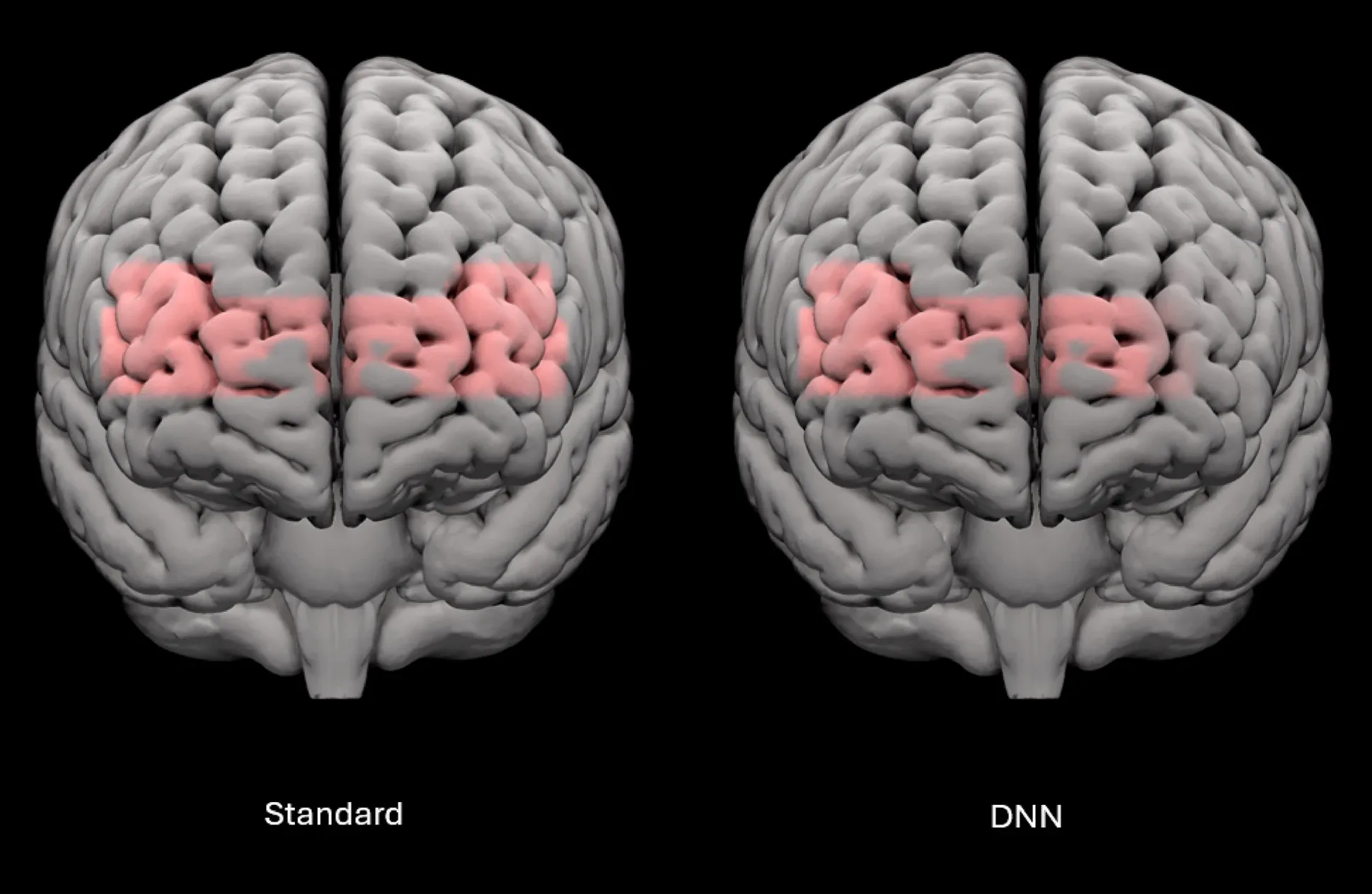

Brain activation during speech-in-noise testing is shown for the standard listening program (left) and the deep neural network (DNN) program (right), with shading indicating areas of greater activity compared with baseline.

Turning research into practical hearing solutions

This work builds on more than a decade of research in professor Russo’s SMART Lab and was supported through his NSERC/Sonova Senior Industrial Research Chair in Auditory Cognitive Neuroscience. Sonova’s innovation lay in shrinking the AI so that it could run directly on a chip inside the hearing aid. This allowed the AI system to operate in real time without relying on cloud computing, which can introduce delays.

For users, this could mean less cognitive fatigue by the end of the day and greater comfort staying engaged in conversations at work, at home or in social settings.

Looking ahead: Protecting brain health as we age

Professor Russo’s team has identified a group of older adults with hearing loss who show what he calls “neural inefficiency.” In these individuals, the brain works harder during listening, but the extra effort does not improve understanding and may even worsen it.

The next phase of research will examine whether adopting AI-powered hearing aids earlier in the course of hearing loss can prevent this pattern. In a planned long-term study, people with early-stage hearing loss will be tested before and after two years of hearing-aid use to see whether the technology helps maintain healthy brain function.

“If we can intervene earlier and reduce chronic overuse of these brain regions,” he explained, “we may be able to support healthier listening and potentially contribute to better cognitive outcomes as people age.”

By reducing the mental strain of listening, AI-enhanced hearing aids may help people communicate more easily and support brain health as they age.