New research to help Government of Canada fight disinformation on social media

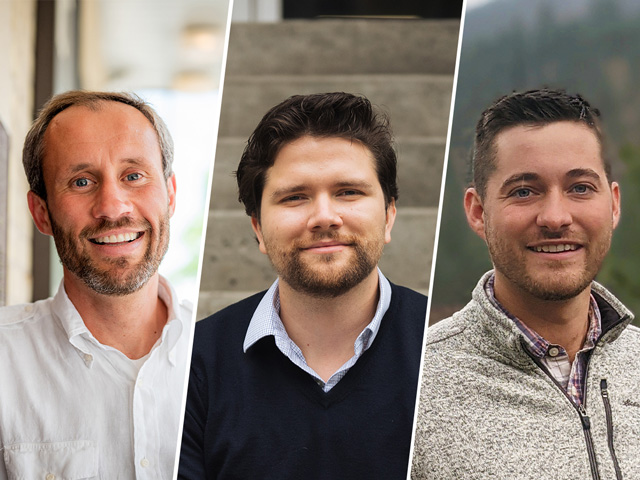

Left to right: Pawel Pralat, Andrei Betlen and David Miller

Like all computer science students, alumni David Miller (Computer Science ’16) and Andrei Betlen (Computer Science ’17) took mathematics classes as standard fare. Neither could have imagined that years later, they’d reconnect with their former math professor, Pawel Pralat, on a problem of national importance.

This time, Betlen and Miller are co-founders of data analysis software start-up Patagona Technologies. Since 2018, they’ve been tackling defence and security challenges under a Department of National Defence innovation program.

The pair recently pitched a solution to detect hostile influencers who organize campaigns to spread disinformation on social media. Now, they need the mathematics to finish building it. They’ve turned to Pralat, one of the world’s leading experts in network science.

Together, they’re creating first-of-their-kind hybrid algorithms that combine two distinct machine learning approaches into a single super-tool, one that simultaneously analyzes user content and the underlying structure of social networks. The research expands on Pralat’s prior work in a growing area of data science: graph embeddings.

Ferreting out bots, cyborgs and hostile actors

Correct, incorrect and deliberately misleading information flows freely on social media platforms. Far less obvious is that certain content, sometimes in sizable amounts, is created by ‘hostile actors’ masquerading as legitimate users.

Behind the curtains may be foreign states, criminals or terrorists. Their goal: manipulate genuine users to advance a particular cause — sway elections, introduce ideologies, undermine public confidence, and so forth.

Their tools of choice: bots (internet robots) and cyborgs (hybrid accounts jointly managed by humans and bots). These automated super-spreaders can propagate ideas faster than humans can consume or properly evaluate them. Distorted perceptions of reality can quickly result.

Patagona Technologies’ product, when completed, will fortify the government’s ability to detect such nefarious activity and counteract disinformation campaigns that threaten national security.

“It’s a very important problem, especially for Canada’s peacekeeping and humanitarian missions,” says Betlen. “We’re providing new tools and technical capabilities to help limit the ability of adversarial nation states and terrorist organizations to disrupt and undermine these missions through social media manipulation.”

An illustration of an embedding of social media users: similar users are embedded close to each other.

New solution: User content + network structure

The main challenge is to develop machine learning algorithms that can differentiate artificial social media accounts from ordinary ones.

Patagona Technologies’ innovation is the first of its kind to do so by integrating two standalone techniques into a single tool. One searches for suspicious content (posts, comments, hashtags); the other, for abnormal network structure (connections, communities, interactions, complex social manoeuvres, etc.).

Embeddings are an integral part of the framework. The technique takes large, complex datasets — such as words in content, or users and connections — and converts them into vectors for easier handling by machine learning models.
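As a rough illustration of what converting users and connections into vectors can look like, the sketch below embeds a tiny made-up network with a standard spectral method (eigenvectors of the normalized graph Laplacian). This is a textbook technique chosen for brevity, not the algorithm the research team is developing; the function name `spectral_embedding` and the six-user toy graph are assumptions made for this example.

```python
import numpy as np


def spectral_embedding(adjacency, dim=2):
    """Return one `dim`-dimensional vector per node of an undirected graph.

    Uses eigenvectors of the symmetric normalized Laplacian.
    Assumes every node has at least one connection (no zero degrees).
    """
    adjacency = np.asarray(adjacency, dtype=float)
    degrees = adjacency.sum(axis=1)
    inv_sqrt = 1.0 / np.sqrt(degrees)
    normalized = inv_sqrt[:, None] * adjacency * inv_sqrt[None, :]
    laplacian = np.eye(len(adjacency)) - normalized
    # eigh returns eigenvalues in ascending order for symmetric matrices.
    _, eigenvectors = np.linalg.eigh(laplacian)
    # Skip the first eigenvector (eigenvalue 0), which carries no structure.
    return eigenvectors[:, 1:dim + 1]


# Hypothetical network: users 0-2 form one tight group, users 3-5 another,
# and a single connection (2, 3) links the two groups.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
adjacency = np.zeros((6, 6))
for u, v in edges:
    adjacency[u, v] = adjacency[v, u] = 1.0

vectors = spectral_embedding(adjacency, dim=2)
same_group = np.linalg.norm(vectors[0] - vectors[1])
other_group = np.linalg.norm(vectors[0] - vectors[5])
print(vectors.round(3))
print("same group closer:", same_group < other_group)
```

In this toy graph, users from the same tightly connected group end up with nearby coordinates, while users from the other group land on the opposite side of the first axis.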

Pralat is working with a large team of students and researchers, including Ryerson mathematics PhD student Ash Dehghan and SGH Warsaw School of Economics professor Bogumil Kaminski, on the graph embeddings side.

Pralat elaborates: “Bots and cyborgs behave differently from a network science point of view. The structure around them is different. With graph embeddings, we can assign coordinates to the data and find patterns in how bots operate, regardless of subject matter. Right now, we’re still conducting experiments — including a fun one using data from Marvel comic books to identify villains among other characters.”

Once the role of social media influencers is clearly defined, the framework will include a classifier that analyzes users and predicts hostile actors.
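To make the idea of a classifier over both kinds of information concrete, here is a minimal sketch that joins a content vector and a graph vector for each user and labels a new user by the nearest class average. The nearest-centroid rule is a deliberately simple stand-in, not the team's classifier; the helper names (`combine_features`, `fit_centroids`, `predict`) and all numbers are invented for illustration.

```python
import numpy as np


def combine_features(content_vectors, graph_vectors):
    """Place each user's content vector and graph vector side by side."""
    return np.hstack([content_vectors, graph_vectors])


def fit_centroids(features, labels):
    """Compute the average feature vector of each labelled class."""
    return {int(c): features[labels == c].mean(axis=0) for c in np.unique(labels)}


def predict(centroids, features):
    """Assign each row to the class whose centroid is closest."""
    classes = list(centroids)
    distances = np.stack(
        [np.linalg.norm(features - centroids[c], axis=1) for c in classes],
        axis=1,
    )
    return np.array(classes)[distances.argmin(axis=1)]


# Made-up embeddings for four labelled accounts (0 = ordinary, 1 = suspicious).
content = np.array([[0.9, 0.1], [0.8, 0.2], [0.1, 0.9], [0.2, 0.8]])
graph = np.array([[0.7, 0.0], [0.6, 0.1], [-0.5, 0.2], [-0.6, 0.1]])
labels = np.array([0, 0, 1, 1])

centroids = fit_centroids(combine_features(content, graph), labels)

# A hypothetical unlabelled account, described by the same two kinds of vector.
new_user = combine_features(np.array([[0.15, 0.85]]), np.array([[-0.55, 0.15]]))
prediction = predict(centroids, new_user)
print("predicted class:", prediction[0])
```

A production system would use a far richer model and real labelled data; the point here is only that content and structure can feed one decision once both are expressed as vectors.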

The future ahead

The project runs through to next fall. Meanwhile, Betlen and Miller will work with government military experts on feedback for the platform — exciting prospects for two young graduates competing with larger, established firms in the tight Canadian defence space.

Beyond national security, the new platform could also be used in non-governmental contexts. Patagona Technologies is already talking with researchers and organizations that study domestic disinformation about using the tools to harvest insights that could inform Canadian public policy.

Miller reflects on the path he and Betlen have taken since their Ryerson days: “We definitely wouldn’t be here now without the education we got from Ryerson, including experience with start-ups from the Zone Learning ecosystem. Andrei and I have the freedom to explore and go after problems that are incredibly relevant and interesting to us. If we’re still hacking around together in another ten years, that would be great. But we’re very happy with where we’re at right now.”